Every developer who has built a RAG system on complex documents knows this problem: Your agent retrieves the right section, finds the value it needs, and then hits a reference signal. “See Section 4.3.” “Refer to Appendix C, Table 7.” “As defined in Clause 14(b).” Four hops later, the full answer lives across three different parts of the document, none of which your retrieval pipeline connected.

Cross-referencing is how humans read complex documents. It is not how RAG systems do it.

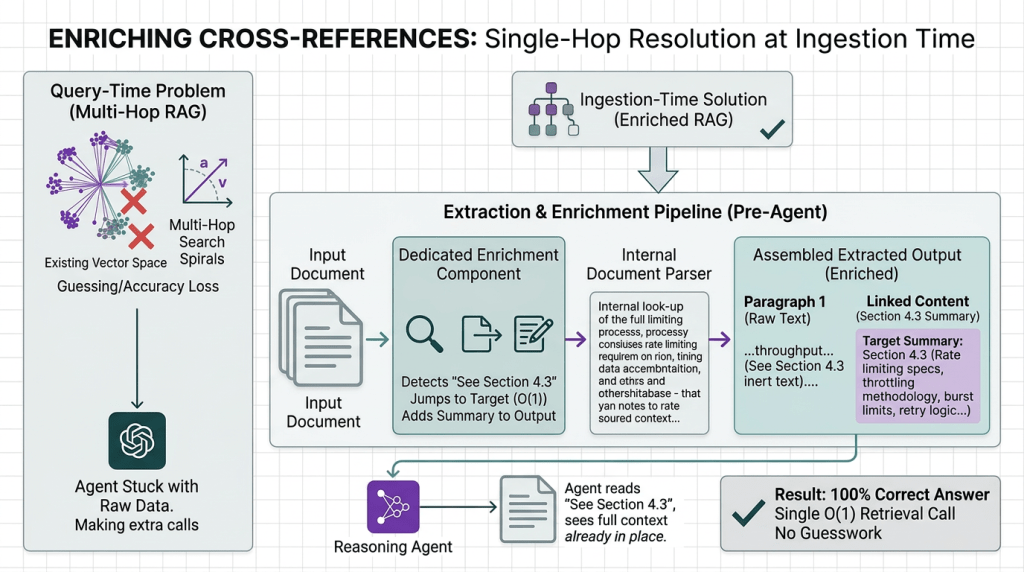

Conventional retrieval-augmented generation treats each chunk as an island. It retrieves text based on semantic similarity and hands the LLM a flat pile of fragments. Cross-references, the very mechanism authors use to connect ideas across a document, are invisible to the system. The footnote exists somewhere in the vector database. But the agent has no idea it is connected to the figure you just asked about.

This article explains why cross-reference resolution is a first-class problem in production RAG, why vector search cannot solve it structurally, and how Kudra’s enrichment gives agentic systems the ability to follow references the way a human expert would.

Why Cross-References Break Conventional RAG

Cross-references are deliberate navigational signals placed by authors to connect information that belongs together but cannot occupy the same physical location. In any complex document, they appear constantly:

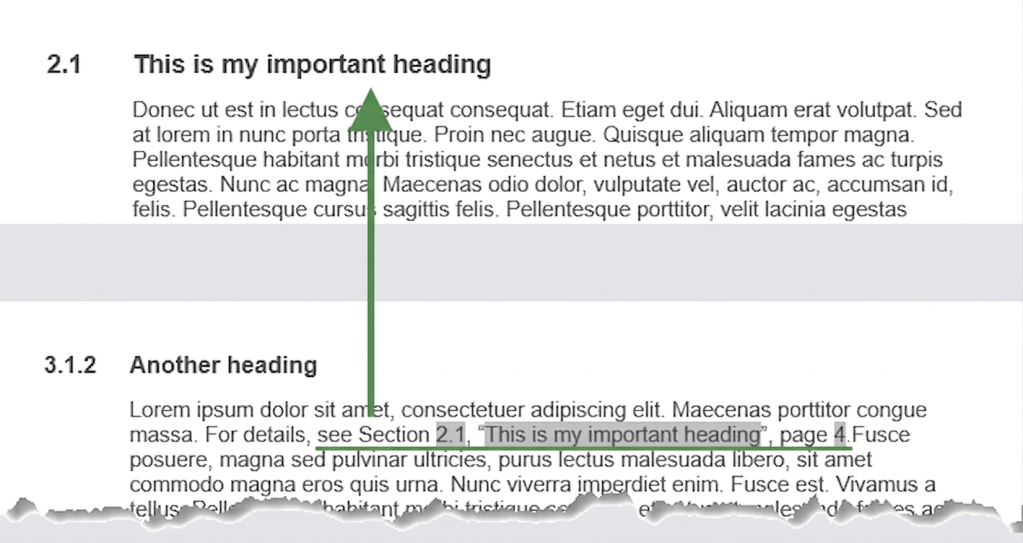

When a human reads one of these signals, they navigate to the referenced location, read it in context, and return with enriched understanding. The answer to the original question now includes the referenced content.

Vector RAG cannot do this. Here is why:

The Chunking Problem

When a document is chunked into 512-token fragments and embedded, cross-reference signals are severed from their targets. The text “See Section 4.3” lands in one chunk. Section 4.3 lands in a different chunk, possibly in a completely different embedding cluster, because its language does not semantically resemble the paragraph that cites it.

Similarity search between these two chunks will typically return a low score. Suppose the citing paragraph, on “Maximum throughput”, sits in Chunk 847, and Section 4.3, “Rate Limiting”, sits in Chunk 1203. The two share some vocabulary, but if the query is “What is the request limit?”, the system may retrieve Chunk 847 and miss Chunk 1203 entirely, or retrieve both without understanding that one explicitly points to the other.

The LLM then produces an answer using only the top-line number, missing the throttling methodology, the burst limit logic, and the retry behavior buried three paragraphs into Section 4.3.
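A toy illustration of this failure mode follows. It uses plain token overlap as a crude stand-in for embedding similarity, and the chunk texts, the limit value, and the helper names are all invented for the example. Real embeddings are far richer than this, so treat it as a sketch of the mechanism rather than a measurement.

```python
import re

def tokens(text):
    """Lowercased word tokens; a crude stand-in for what an embedding captures."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap_score(query, chunk):
    """Jaccard overlap between query and chunk tokens (proxy for similarity)."""
    q, c = tokens(query), tokens(chunk)
    return len(q & c) / len(q | c) if q | c else 0.0

# Invented chunk texts, for illustration only.
chunks = {
    847: "Maximum throughput: the request limit is 1000 per minute. See Section 4.3.",
    1203: "Section 4.3 Rate Limiting. Bursts are throttled by a token bucket; "
          "clients should retry with exponential backoff.",
}

query = "What is the request limit?"
scores = {chunk_id: overlap_score(query, text) for chunk_id, text in chunks.items()}
top_1 = max(scores, key=scores.get)

print(scores)
print("top-1 chunk:", top_1)
```

With a top-1 retrieval, only the citing chunk comes back. The chunk that holds the throttling and retry details shares no tokens with the query in this toy setup, so nothing pulls it into the result set.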

The Cascading Reference Problem

Real documents do not have single-hop references. They have chains.

A configuration parameter cites a defaults section. The defaults section references an environment variable spec. The env var spec links to a deployment appendix. Each hop requires the system to navigate to a new location and return with enriched context.

Vector RAG handles one hop poorly. It handles chains catastrophically. Each jump requires a new retrieval call with a reformulated query, and there is no mechanism to track where you started or why you are looking.

The result is agents that either stop at the first reference (incomplete answer) or spiral into repeated retrieval calls that burn tokens without converging on an answer.
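To make the bookkeeping concrete, here is a minimal sketch of what following a chain safely would require: a record of where each hop came from, a set of visited sections, and a hop budget. The graph contents and the `follow_references` helper are hypothetical, and nothing in a plain vector store provides this structure for you.

```python
from collections import deque

# Hypothetical reference graph: each section maps to the sections it cites.
REFERENCES = {
    "config-parameter": ["defaults"],
    "defaults": ["env-var-spec"],
    "env-var-spec": ["deployment-appendix"],
    "deployment-appendix": ["defaults"],  # a cycle back to an earlier section
}

def follow_references(start, graph, max_hops=5):
    """Breadth-first walk over citations.

    Returns (section, hops_from_start, cited_by) tuples in visit order.
    """
    visited = {start}
    queue = deque([(start, 0, None)])
    trail = []
    while queue:
        section, hops, cited_by = queue.popleft()
        trail.append((section, hops, cited_by))
        if hops >= max_hops:
            continue  # hop budget spent: stop expanding this branch
        for target in graph.get(section, []):
            if target not in visited:  # skip sections already seen (breaks cycles)
                visited.add(target)
                queue.append((target, hops + 1, section))
    return trail

for section, hops, cited_by in follow_references("config-parameter", REFERENCES):
    print(hops, section, "<-", cited_by)
```

The `cited_by` field is what lets the system remember why it is looking at a section, and the visited set is what prevents the retrieval spiral when two sections cite each other.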

What Cross-Reference Resolution Actually Requires

Following a reference correctly requires three capabilities that semantic search does not provide:

- Reference Detection: The system must recognize that “See Note 8” is a navigational signal, not just text. It must parse the type of reference (note, schedule, appendix, figure, table) and identify the target identifier.

- Target Location: The system must know where the target lives in the document. “Note 8” must map to a specific node in the document structure, not a search query.

- Contextual Retrieval: Once located, the system must retrieve the referenced content with its full structural context: the section it belongs to, the subsections it contains, the tables it references, and any further cross-references it introduces.

Vector search solves none of these structurally. It can approximate reference detection if the embedding model is strong enough, but location and contextual retrieval require explicit document structure.
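The three capabilities can be sketched in a few lines, assuming the document has already been parsed into a structure keyed by reference type and identifier. The regular expression, the `Node` class, and the index layout below are illustrative assumptions, not a description of any particular product, and a production detector would need to handle many more reference styles.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Matches e.g. "Section 4.3", "Appendix C", "Table 7", "Clause 14(b)", "Note 8".
REF_PATTERN = re.compile(
    r"\b(Section|Appendix|Note|Clause|Table|Figure|Schedule)\s+"
    r"((?:\d+(?:\.\d+)*|[A-Z]\b)(?:\([a-z0-9]+\))?)"
)

@dataclass
class Node:
    kind: str
    ident: str
    title: str
    text: str
    parent: Optional[str] = None  # enclosing structural unit, if any

def detect_references(text):
    """Reference detection: return (kind, identifier) pairs found in text."""
    return [(m.group(1), m.group(2)) for m in REF_PATTERN.finditer(text)]

def locate(index, kind, ident):
    """Target location: exact lookup in the document structure, not a search."""
    return index.get((kind.lower(), ident))

def retrieve_with_context(index, kind, ident):
    """Contextual retrieval: the target plus its place in the structure."""
    node = locate(index, kind, ident)
    if node is None:
        return None
    return {
        "heading": f"{node.kind} {node.ident}: {node.title}",
        "parent": node.parent,
        "text": node.text,
        "further_references": detect_references(node.text),
    }

# Invented example content.
index = {
    ("section", "4.3"): Node(
        "Section", "4.3", "Rate Limiting",
        "Burst limits apply. Retry behavior is in Appendix C.",
        parent="Section 4",
    ),
}
refs = detect_references("See Section 4.3. As defined in Clause 14(b).")
print(refs)
print(retrieve_with_context(index, *refs[0]))
```

Note that `locate` is a dictionary lookup rather than a similarity query. That is the structural piece a vector index alone does not supply.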

The Solution: Enriching Cross-References at Extraction Time

The core insight is simple: cross-reference resolution should not be the agent’s problem. It should be solved before the agent ever sees the data — during extraction, when the full document is still available.

Instead of handing the agent raw text and hoping semantic search connects the dots at query time, you enrich the extracted output at ingestion time. When a section says “See Section 4.3,” the extraction pipeline doesn’t just preserve that signal as inert text. A dedicated enrichment component detects it, identifies what Section 4.3 is about, and adds a summary of that linked content directly into the extraction output, alongside the original text.
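As a rough sketch of the idea, the snippet below enriches each section with a summary of every section it cites. The output field names (`linked_sections`, `summary`, `resolved`) are hypothetical and not any tool's actual schema, and `summarize` is a truncation placeholder standing in for the generative step a real pipeline would use.

```python
import re

SECTION_REF = re.compile(r"\bSection\s+(\d+(?:\.\d+)*)")

def summarize(text, max_words=12):
    """Placeholder: truncate to max_words. A real pipeline would call a model."""
    words = text.split()
    suffix = "..." if len(words) > max_words else ""
    return " ".join(words[:max_words]) + suffix

def enrich(sections):
    """Attach a summary of every referenced section to the section citing it."""
    enriched = []
    for section_id, text in sections.items():
        links = []
        for target_id in SECTION_REF.findall(text):
            target_text = sections.get(target_id)
            links.append({
                "reference": f"Section {target_id}",
                "resolved": target_text is not None,
                "summary": summarize(target_text) if target_text is not None else None,
            })
        enriched.append({"id": section_id, "text": text, "linked_sections": links})
    return enriched

# Invented section texts, for illustration only.
sections = {
    "2.1": "Maximum throughput is capped per client. See Section 4.3.",
    "4.3": "Rate limiting covers throttling, burst limits, and retry behavior.",
}
for record in enrich(sections):
    print(record)
```

Because the whole document is in memory at this stage, resolving a reference is a direct lookup. Unresolved references are kept and flagged rather than dropped, so they can be reviewed later.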

The agent retrieves the document. The extracted output already tells it what each referenced section contains. No extra retrieval calls. No guessing. No multi-hop search spirals.

Building Cross-Reference-Aware Extraction with Kudra

Kudra is a workflow builder for document extraction. You assemble a pipeline of processing components on a canvas, each one responsible for a specific enrichment task, and Kudra runs every document through that pipeline automatically. The result is a JSON output containing the full extracted document text, enriched with structured metadata about linked sections so the information is complete from the moment it lands in your system.

Cross-reference resolution is not something you wire up separately. It is a component you add to the workflow.

Step 1: Build Your Workflow

No custom code. Drag and drop the components, configure the enrichment prompts, and Kudra handles the rest. The cross-reference enrichment component uses generative AI to understand what each reference signal points to and what the target content is about — producing output that makes the connection explicit rather than leaving it implicit in the raw text.

Step 2: Create a Project and Upload Your Documents

Create a project in Kudra and upload your documents. Kudra runs every document through the workflow automatically and lets you inspect the enriched JSON output before you write a single line of agent code.

This inspection step matters more than most teams expect. You can see exactly what was extracted and how references were enriched: which reference signals were detected, what summaries were generated for linked sections, whether the enrichment captured the right context. If a reference to “Appendix C, Table 7” was detected but the linked summary is too thin to be useful, you adjust the enrichment component and re-run, before it becomes a silent failure downstream.
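If you want to automate part of this review, a small script can flag linked summaries that look too thin. The JSON shape below is an assumption made for illustration, since Kudra's actual output schema may differ, and the five-word threshold is arbitrary.

```python
import json

# Hypothetical enriched output; the real schema may differ.
ENRICHED_JSON = """
{
  "sections": [
    {
      "id": "2.1",
      "text": "Limits are listed in Appendix C, Table 7.",
      "linked_sections": [
        {"reference": "Appendix C, Table 7", "summary": "Limits table."}
      ]
    },
    {
      "id": "3.2",
      "text": "See Section 4.3.",
      "linked_sections": [
        {"reference": "Section 4.3",
         "summary": "Rate limiting: throttling method, burst limits, and retry behavior for clients."}
      ]
    }
  ]
}
"""

def find_thin_links(document, min_words=5):
    """Return (section_id, reference, word_count) for summaries under min_words."""
    issues = []
    for section in document.get("sections", []):
        for link in section.get("linked_sections", []):
            word_count = len((link.get("summary") or "").split())
            if word_count < min_words:
                issues.append((section.get("id"), link.get("reference"), word_count))
    return issues

document = json.loads(ENRICHED_JSON)
for section_id, reference, word_count in find_thin_links(document):
    print(f"Section {section_id}: summary for '{reference}' has only {word_count} words")
```

A word count is only a rough signal. It catches empty or stub summaries, but a human still needs to judge whether a longer summary captured the right context.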

Step 3: Chunk with Confidence, Linked Context Travels with Every Chunk

Once extraction quality looks right, you can chunk the enriched output and load it into your vector store as you normally would, with one critical difference.

Because the linked section summaries are embedded directly in the JSON alongside the source text, they chunk together. When your agent retrieves the top-k results for a query, the chunk that mentions “See Section 4.3” carries the summary of what Section 4.3 contains right alongside it. The referenced content is already there. It does not need to be separately retrieved, and it does not need to score well on its own against the query.

This is what makes hallucination on cross-references a solvable problem. The agent is no longer working from an incomplete fragment that trails off into a reference signal. It has the source text, the reference, and a precise summary of what the reference points to, all in the same retrieved chunk. The top-k results are complete by construction, not by luck of the similarity draw.
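The idea that linked summaries chunk together with the source text can be sketched as follows. The record shape and the `build_chunks` helper are hypothetical, and the splitter works on characters for simplicity where a real pipeline would more likely count tokens.

```python
def build_chunks(sections, max_chars=800):
    """Split each section into chunks that always carry its linked summaries."""
    chunks = []
    for section in sections:
        notes = "".join(
            f"\n[Linked context, {link['reference']}: {link['summary']}]"
            for link in section.get("linked_sections", [])
            if link.get("summary")
        )
        # Reserve room for the notes so they are never cut off from the chunk.
        # If the notes alone exceed max_chars, chunks will run over the limit.
        budget = max(max_chars - len(notes), 1)
        body = section["text"]
        for start in range(0, max(len(body), 1), budget):
            chunks.append({
                "section_id": section["id"],
                "text": body[start:start + budget] + notes,
            })
    return chunks

# Invented enriched records, for illustration only.
sections = [
    {
        "id": "2.1",
        "text": "Maximum throughput is capped per client. See Section 4.3.",
        "linked_sections": [{
            "reference": "Section 4.3",
            "summary": "Rate limiting: throttling, burst limits, retry behavior.",
        }],
    },
    {"id": "4.3", "text": "Rate limiting details.", "linked_sections": []},
]
chunks = build_chunks(sections)
print(chunks[0]["text"])
```

This sketch attaches the notes to every piece of a section, which is redundant when a section splits into several chunks but guarantees that the piece containing the reference also carries its summary.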

You wire this into your agent the same way you would any retrieval pipeline. Generate an API key from the project settings, call the workflow endpoint, chunk the enriched output, and index it. The improvement is in what you’re chunking, not in how you chunk it.

Final Thoughts

Cross-reference resolution is not a retrieval problem. It is a data completeness problem. A chunk that says “See Section 4.3” and a chunk that contains Section 4.3 are strangers in a vector store. Similarity search cannot reliably reunite them, and the agent has no way to know what it is missing.

The fix is to complete the information at extraction time. Enrich your output so that reference signals carry the context they point to before the data ever reaches your vector store. When your chunks are complete, your top-k results are complete. And when your top-k results are complete, your agent stops hallucinating on the gaps.

Solve the extraction. Everything downstream gets better.